I am obsessed with Hatsune Miku.

A quick history.

Back in 2000, Yamaha started developing vocaloid technology-- a synthesizer aimed at recreating a singing voice. By the mid-2000s the technology had been encapsulated in two "virtual soul vocalists", Leon and Lola. What's interesting here is that almost immediately upon creating a singing synthesizer it was personified as a fictional entity.

Crypton Future Media created their first vocaloid, Meiko, in 2004. Hatsune Miku was released as the first of a "character vocal series" in 2007. Her name roughly translates to "first future sound." There is imagery for her: big anime eyes, blue hair that's about four feet long. Her voice was synthesized by sampling the voice of Saki Fujita. She's meant to be a young teen and her voice was modified to fit that role.

Okay. We know how fictional entities can take Japan (and the US) by storm. Think Pokemon. Think Yu-Gi-Oh! But there aren't very many "live" concerts for Pokemon. There have been for Miku.

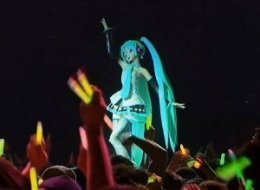

Starting in 2009, Miku has performed on stage with a live band, as an animated image projected onto a scrim.

This is only the start of what's interesting. Think of the technology. Was Miku rendered, recorded and projected and the band kept up like in silent movies? Was she pre-rendered and then the performance locked into the band's work? Was there real time rendering involved? I have no idea.

Think of the music. Enjoyable pop, by and large. There have been literally thousands of songs written for Miku, as the vocaloid technology is readily available. How were the songs chosen? Is this crowdsourcing of vocal material? Have we reached the age where pop songs can literally grow from the grass roots up?

Think of the celebrity phenomenon. Miku, and her three "costars", play to live audiences at shows sold out months in advance. People are paying top dollar for a concert given by a fictional creation.

But for our purposes today I'm just going to talk about the interaction between Miku and her audience.

Humans are tokenized communicators. We don't exchange real things; we exchange symbolic tokens. Even real objects are imbued with layers and layers of symbolism. If I'm in the garden working with two trowels and you are next to me digging with your fingers, I might give you the second trowel. That simple act has a wealth of information associated with it. By doing so I've acknowledged you have a lack. I've recognized your need and moved to fill it. I've stated willingness to share. And you, as the recipient, instantly grasp this information. All by the movement of the token trowel.

Of course, humans build tokens out of other tokens, have multilayered levels of communication, deceive, obfuscate and otherwise mess with communication. I could make a strong argument that everything we do, everything that makes us human, has something to do with this tokenized communication with each other. It's what we do best. I'd hazard a guess that this ability has been selected for and is one of the reasons we've evolved this oversized brain of ours.

Terry Winograd and Fernando Flores wrote Understanding Computers and Cognition, one of the most brilliant discussions of human communication I've ever read. I haven't found much discussion of it on the net but there's a good article here that talks about it.

One of the points they made was that many of the mechanisms we take for granted are in fact tokenized communications between the developer of the mechanism and the user. If you use a microwave oven, the way the oven works (how to set the time and the power, what power settings are available, the shielding) is in fact a model of how the oven should work. A finite set of people have communicated that model to you, the user.

A modern three-minute song consists of hook, melody, various bridges and chorus. It's a simple model of a complex phenomenon. However, the modern song itself is a simplification of earlier song forms that go back hundreds of years. Regardless, the song is a collection of tokens that have been grouped together into a single entity that is intended to convey a communication to the listener.

Music binds time: it has a beginning, a middle and an end. It is repeatable-- and not. Is the experience of hearing a song the first time the same as the second? The fiftieth? How about comparing a studio recording with a live performance? We recognize the song is the same even though we also recognize it is different. Music evokes tremendous activity all the way from time perception and auditory processing to activating memory and emotion and higher cognition. That's a lot of activity caused by a three minute sound.

Miku is a performance organizing principle on stage. The band members are fully human and they play right alongside her. They are, in fact, virtuoso musicians. My son pointed out that Miku was nothing without a good band behind her. I pointed out that's true of most pop singers.

In the most recent vocaloid concert (video here), Miku is brought back for an encore and seems to stop and look away as if overcome with emotion. (See here, about 4:23.) The audience loves it.

So, what is Hatsune Miku?

She's not an Artificial Intelligence. Not that there's not a lot of intelligence in her software but she's no AI. Someone programmed that behavior into her. Someone made a communication to the audience with Miku's actions as the token.

Alan Turing, and many of the early computer scientists, were concerned with computer consciousness. How would they ever tell? Turing devised what has become known as the Turing Test. The idea of the Turing Test was that you take a teletype in a room and use it to converse with an entity out of sight. If the entity is indistinguishable from a human being it is, in effect, human regardless of whether it is in silicon or not.

I've never liked the Turing Test, for a lot of reasons. For one, it essentially says we can't define consciousness but we, as humans, know it intrinsically. So we'll use that innate knowledge to recognize it. This is weak analysis. It's analogous to those who say, "I can't define pornography but I know it when I see it." It may or may not be true but it isn't much in the way of science.

It also presupposes we can in fact do that recognition. Humans project their own qualities willy nilly on the world. We push human qualities to the universe, our pets, dolphins, elephants, lizards, rocks and the sun. Why do we think we'd do any better with a teletype?

It also presupposes a particular kind of consciousness and intelligence: human. Other species might fail the test and still have intelligence and consciousness.

Finally, it is prone to failure on its own merits. If some genius writes a program that can mimic a human interaction over a teletype-- some super Watson, for example-- does that by definition make it conscious? Think spam bots. Some of them are pretty damned good-- in a limited venue, to be sure. But though we're not in the country of such computer programs, we can see it from where we stand.

If we substitute a song for a teletype, does Miku pass the Turing Test? Let's push further into the music. Miku fronts for the band but she doesn't interact with it. She can't depart onto an unrehearsed solo and have the band twig to when she hands it off. Almost any garage band can do that and some of the best bands, like The Who or Iron Butterfly, could make whole concerts out of handing improvisations from one member to another. A whole field of music, jazz, was in part founded on it.

So, let's say future Miku improvements do allow her to act independently within this musical Turing Test. Would her passing define her to be conscious and intelligent?

I would say consciousness is not proven but intelligence is. Like the teletype program I suggested before, within that particular and narrow venue Miku would be every bit as intelligent as a human. Consciousness implies self-awareness of the experience and I don't think that's proven by the Turing Test in any way.

But, returning to Winograd and Flores, any object we create can be (and likely is) a token of communication between people. Miku, as she now stands, is a mechanism for communicating the song to the audience. The song is a creation of a set of individuals: the members of the band, the composer, the arranger, the programmer that taught Miku the song and the animator that produced her visualization.

Since I'm obsessed with her, I'll say the communication is good.

We had a pretty bad storm over the weekend. For our part, we lost some parts of trees but not much in the way of property damage. We didn't lose power. I have friends whose power is out and who have no relief in sight.
